This article shows you how to add Confluent Cloud for Apache Kafka source to an eventstream.

Confluent Cloud for Apache Kafka is a streaming platform offering powerful data streaming and processing functionalities using Apache Kafka. By integrating Confluent Cloud for Apache Kafka as a source within your eventstream, you can seamlessly process real-time data streams before routing them to multiple destinations within Fabric.

Prerequisites

Access to a workspace in the Fabric capacity license mode or the Trial license mode, with Contributor or higher permissions.

A Confluent Cloud for Apache Kafka cluster and an API Key.

Your Confluent Cloud for Apache Kafka cluster should be publicly accessible and not be behind a firewall or secured in a virtual network. If it resides in a protected network, connect to it by using Eventstream connector virtual network injection.

If you plan to use TLS/mTLS settings, make sure the required certificates are available in an Azure Key Vault:

- Import the required certificates into Azure Key Vault in .pem format.

- The user who configures the source and previews data must have permission to access the certificates in the Key Vault (for example, Key Vault Certificate User or Key Vault Administrator).

- If the current user doesn’t have the required permissions, data can’t be previewed from this source in Eventstream.
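Before you import a certificate, it can help to confirm that the file really is PEM text. The following is a minimal sketch that uses only the Python standard library; the function name `count_pem_certificates` is hypothetical, and the check is structural only (it doesn't validate the X.509 content or anything specific to Azure Key Vault).

```python
import ssl

BEGIN = "-----BEGIN CERTIFICATE-----"
END = "-----END CERTIFICATE-----"


def count_pem_certificates(pem_text: str) -> int:
    """Count the certificate blocks in PEM text that have valid framing.

    Hypothetical helper: it checks only the BEGIN/END markers and the
    base64 body, not whether the bytes form a real X.509 certificate.
    """
    count = 0
    rest = pem_text
    while BEGIN in rest:
        start = rest.index(BEGIN)
        stop = rest.find(END, start)
        if stop == -1:
            raise ValueError("certificate block has no END marker")
        block = rest[start:stop + len(END)]
        # Raises ValueError if the header or footer is malformed.
        ssl.PEM_cert_to_DER_cert(block)
        count += 1
        rest = rest[stop + len(END):]
    return count
```

A result of 0 for a file you expect to hold a certificate suggests that the file is in another format, such as DER or PFX, and needs conversion first.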

Launch the Select a data source wizard

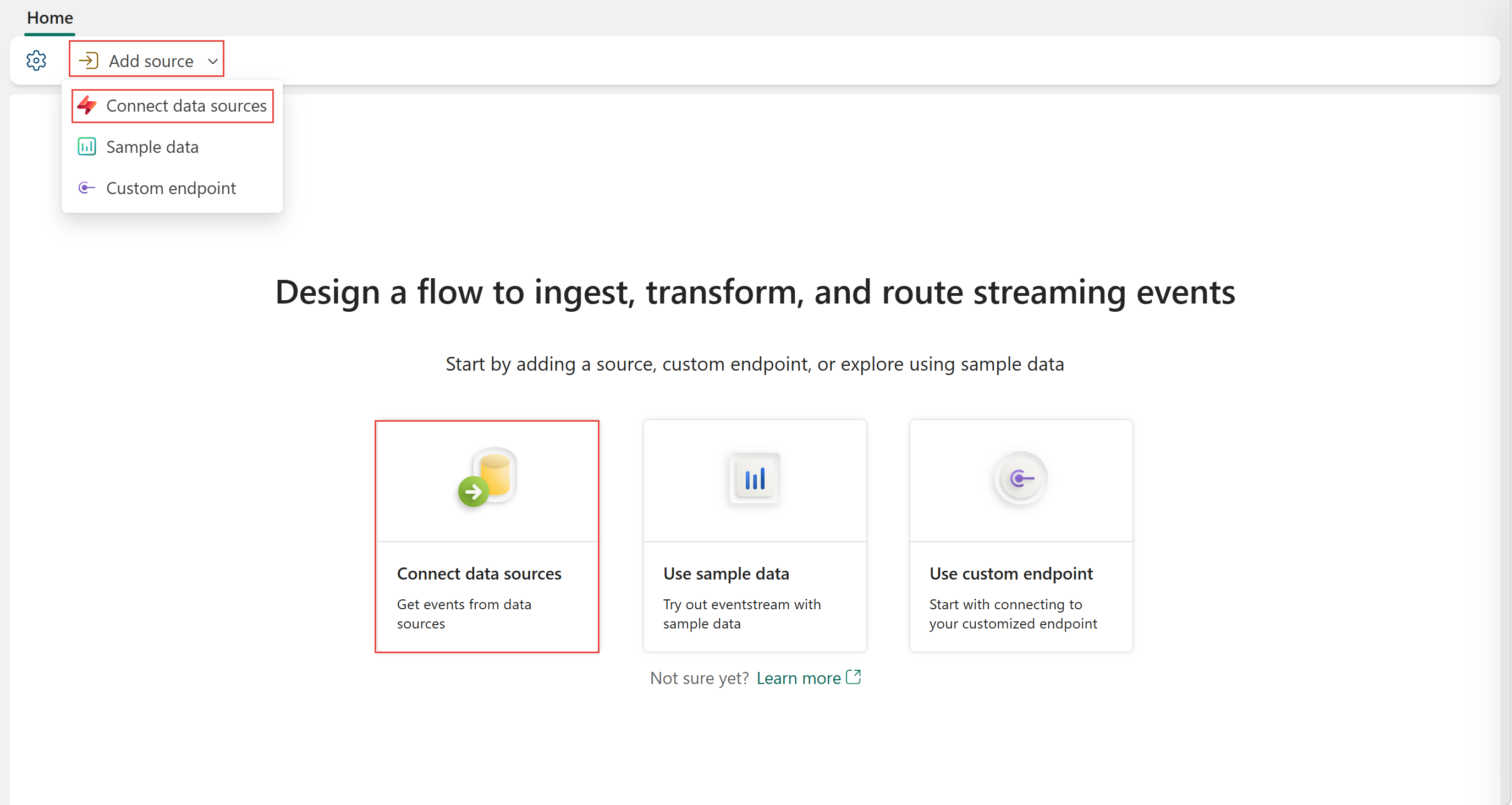

If you haven't added any source to your eventstream yet, select the Connect data sources tile. You can also select Add source > Connect data sources on the ribbon.

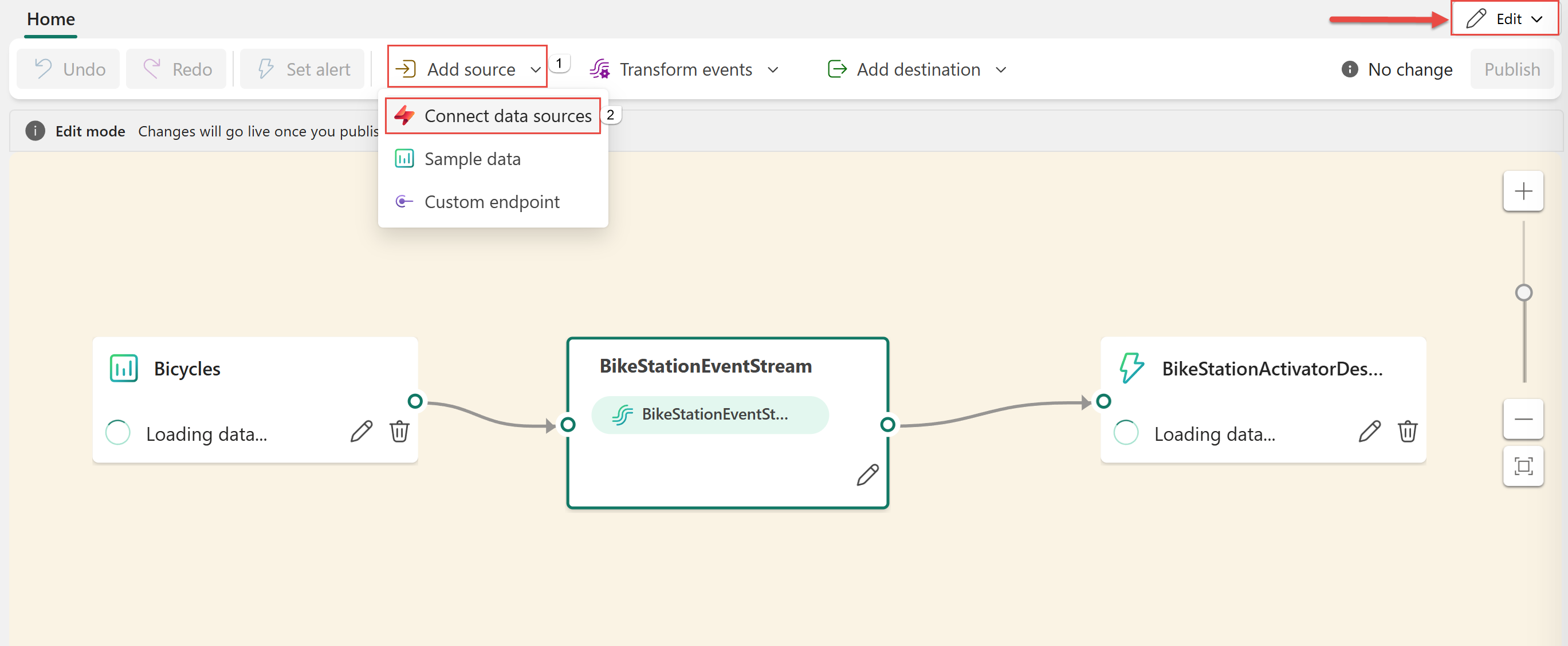

If you're adding the source to an already published eventstream, switch to Edit mode. On the ribbon, select Add source > Connect data sources.

Configure and connect to Confluent Cloud for Apache Kafka

On the Select a data source page, select Confluent Cloud for Apache Kafka.

To create a connection to the Confluent Cloud for Apache Kafka source, select New connection.

In the Connection settings section, enter one or more Confluent Kafka bootstrap server addresses from Cluster Settings on your Confluent Cloud cluster home page. Separate multiple addresses with commas (,).
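To catch typos before you paste the value into the wizard, you can check the comma-separated list locally. This is a minimal sketch, assuming each address has the usual Kafka `host:port` form; the function name and the example host names are placeholders, not values from your cluster.

```python
def parse_bootstrap_servers(value: str) -> list[tuple[str, int]]:
    """Split a comma-separated bootstrap server string into (host, port) pairs.

    Assumes each entry is written as host:port, the usual Kafka convention.
    """
    servers = []
    for entry in value.split(","):
        entry = entry.strip()
        if not entry:
            continue  # tolerate a trailing comma or doubled separator
        host, sep, port = entry.rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError(f"expected host:port, got {entry!r}")
        port_number = int(port)
        if not 1 <= port_number <= 65535:
            raise ValueError(f"port out of range in {entry!r}")
        servers.append((host, port_number))
    if not servers:
        raise ValueError("no bootstrap servers provided")
    return servers


# Placeholder host names, for illustration only.
print(parse_bootstrap_servers("broker-1.example.com:9092, broker-2.example.com:9092"))
```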

In the Connection credentials section, if you have an existing connection to the Confluent cluster, select it from the dropdown list for Connection. Otherwise, follow these steps:

- For Connection name, enter a name for the connection.

- For Authentication kind, confirm that Confluent Cloud Key is selected.

- For API Key and API Key Secret:

Navigate to your Confluent Cloud.

Select API Keys on the side menu.

Select the Add key button to create a new API key.

Copy the API Key and Secret.

Paste those values into the API Key and API Key Secret fields.

Note

If you use only mTLS for authentication, you can enter any string in the Key section when you create the connection.
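For comparison, a standalone Kafka client typically presents a Confluent Cloud API key as SASL/PLAIN credentials over TLS. The sketch below builds such a configuration as a plain dictionary. It illustrates what the API Key and API Key Secret represent and isn't a description of how Eventstream connects internally; the function name is hypothetical, and the property names follow the librdkafka convention used by clients such as `confluent-kafka`.

```python
def confluent_cloud_client_config(bootstrap_servers: str, api_key: str, api_secret: str) -> dict:
    """Build a librdkafka-style config for a Confluent Cloud API key.

    Hypothetical helper for illustration. Java clients spell the mechanism
    property 'sasl.mechanism' (singular) and use a JAAS config instead.
    """
    if not (bootstrap_servers and api_key and api_secret):
        raise ValueError("bootstrap servers, API key, and API secret are all required")
    return {
        "bootstrap.servers": bootstrap_servers,
        "security.protocol": "SASL_SSL",  # SASL authentication over TLS
        "sasl.mechanisms": "PLAIN",
        "sasl.username": api_key,         # the API Key
        "sasl.password": api_secret,      # the API Key Secret
    }
```

Keep the secret out of source control; reading it from an environment variable or a secret store is a common choice.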

Select Connect.

Scroll to see the Configure Confluent Cloud for Apache Kafka data source section on the page. Enter the information to complete the configuration of the Confluent data source.

For Topic name, enter a topic name from your Confluent Cloud. You can create or manage your topic in the Confluent Cloud Console.

For Consumer group, enter the name of a consumer group in your Confluent Cloud cluster. Eventstream uses this dedicated consumer group to read events from the cluster.

For Reset auto offset setting, select one of the following values:

- Earliest – the earliest data available from your Confluent cluster.

- Latest – the latest available data.

- None – Don't automatically set the offset.

Note

The None option isn't available during this creation step. If a committed offset exists and you want to use None, you can first complete the configuration and then update the setting in the Eventstream edit mode.
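The three values appear to mirror the standard Kafka consumer setting `auto.offset.reset`, which applies only when the consumer group has no usable committed offset. The following sketch models that decision for a single partition. It's a simplified illustration of the Kafka semantics, not Eventstream's implementation, and the function and parameter names are hypothetical.

```python
from typing import Optional


def resolve_start_offset(committed: Optional[int], earliest: int, latest: int, reset_policy: str) -> int:
    """Pick the offset a consumer starts from on one partition.

    Simplified model of Kafka's auto.offset.reset: the policy matters only
    when the group has no committed offset. (Real clients also apply it when
    the committed offset is out of range, which this sketch omits.)
    """
    if committed is not None:
        return committed  # a committed offset always wins
    policy = reset_policy.lower()
    if policy == "earliest":
        return earliest
    if policy == "latest":
        return latest
    if policy == "none":
        raise LookupError("no committed offset and reset policy is 'none'")
    raise ValueError(f"unknown reset policy: {reset_policy!r}")
```

This also suggests why None is offered only after a committed offset exists: without one, a consumer that uses None has no position to start from.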

If your Kafka cluster requires mTLS, expand TLS/mTLS settings and configure the following options as needed.

When both Trust CA Certificate and Client certificate and key are enabled and configured, the system automatically uses mTLS to establish the connection. No separate security protocol selection is required.

- Trust CA Certificate: Enable Trust CA Certificate configuration. Select your subscription, resource group, and key vault, and then provide the server CA name.

- Client certificate and key: Enable Client certificate and key configuration. Select your subscription, resource group, and key vault, and then provide the client certificate name.

Note

The TLS/mTLS settings in this section are currently in preview, including Trust CA Certificate, Client certificate and key, and Additional settings.

For sources in a private network, ensure that the Azure Key Vault containing your certificates is connected to the Azure virtual network used by the streaming virtual network data gateway for Eventstream connector virtual network injection (for example, via a private endpoint).

You can expand Additional settings to configure TLS verify hostname, TLS cipher suites, and TLS revocation mode:

- TLS verify hostname: Controls whether hostname verification is enabled for the TLS connection. The default value is True.

- TLS cipher suites: Specifies which TLS cipher suites the client can use. The default value is Use system defaults.

- TLS revocation mode: Controls whether client certificate revocation checking is enabled for the TLS connection. The default value is Off.
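To get a feel for what these three options control, the sketch below sets the closest equivalents on a Python `ssl.SSLContext`. The mapping is an assumption made for illustration; it doesn't describe how Eventstream applies the settings, and the function name and parameters are hypothetical.

```python
import ssl
from typing import Optional


def build_tls_context(verify_hostname: bool = True,
                      cipher_suites: Optional[str] = None,
                      check_revocation: bool = False) -> ssl.SSLContext:
    """Create a client TLS context with analogues of the three options.

    Defaults follow the article: hostname verification on, system default
    cipher suites, revocation checking off.
    """
    ctx = ssl.create_default_context()  # verifies the server certificate chain
    ctx.check_hostname = verify_hostname
    if cipher_suites:
        # OpenSSL cipher string; None keeps the system defaults.
        ctx.set_ciphers(cipher_suites)
    if check_revocation:
        # Requires a CRL to be loaded into the context before connecting.
        ctx.verify_flags |= ssl.VERIFY_CRL_CHECK_LEAF
    return ctx
```

Turning off hostname verification removes a protection against impersonated brokers, so leaving the default in place is usually the safer choice.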

Stream or source details

On the Connect page, follow one of these steps based on whether you're using Eventstream or Real-Time hub.

Eventstream:

In the Source details pane to the right, follow these steps:

For Source name, select the Pencil button to change the name.

Notice that Eventstream name and Stream name are read-only.

Real-Time hub:

In the Stream details section to the right, follow these steps:

Select the Fabric workspace where you want to create the eventstream.

For Eventstream name, select the Pencil button, and enter a name for the eventstream.

The Stream name value is automatically generated for you by appending -stream to the name of the eventstream. This stream appears on the real-time hub's All data streams page when the wizard finishes.

Select Next at the bottom of the Configure page.

Review and connect

Depending on whether your data is encoded using Confluent Schema Registry:

- If not encoded, select Next. On the Review and create screen, review the summary, and then select Add to complete the setup.

- If encoded, proceed to the next step: Connect to Confluent schema registry to decode data (preview)

Connect to Confluent schema registry to decode data (preview)

Eventstream's Confluent Cloud for Apache Kafka streaming connector can decode data produced with the Confluent serializer and its Schema Registry from Confluent Cloud. Decoding data encoded with this serializer requires retrieving the schema from the Confluent Schema Registry. Without access to the schema, Eventstream can't preview, process, or route the incoming data.
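The schema lookup is needed because messages written by the Confluent serializers carry only a schema reference, not the schema itself. In the Confluent wire format, each message starts with a magic byte of 0, followed by a 4-byte big-endian schema ID and then the encoded payload. The sketch below splits a message into those parts; the function name is hypothetical, and fetching the schema and decoding the payload are out of scope.

```python
import struct

MAGIC_BYTE = 0
HEADER_SIZE = 5  # 1 magic byte + 4-byte schema ID


def split_confluent_frame(message: bytes) -> tuple[int, bytes]:
    """Return (schema_id, payload) for a Confluent wire-format message."""
    if len(message) < HEADER_SIZE:
        raise ValueError("message is too short to carry a schema ID header")
    # '>' = big-endian without padding, 'b' = signed byte, 'I' = unsigned 32-bit int
    magic, schema_id = struct.unpack(">bI", message[:HEADER_SIZE])
    if magic != MAGIC_BYTE:
        raise ValueError("message was not written by a Confluent serializer")
    return schema_id, message[HEADER_SIZE:]
```

The returned schema ID is what a consumer sends to the Schema Registry to obtain the schema that decodes the payload.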

You may expand Advanced settings to configure Confluent Schema Registry connection:

Define and serialize data: Selecting Yes serializes the data into a standardized format. Selecting No keeps the data in its original format and passes it through without modification.

If your data is encoded using a schema registry, select Yes when choosing whether the data is encoded with a schema registry.

Use broker TLS certificates: Specifies whether the Kafka broker’s TLS/mTLS certificates are used to secure the connection to the Confluent Schema Registry. Set this option to True when the broker and the Schema Registry use the same certificate configuration.

Then, select New connection to configure access to your Confluent Schema Registry:

- Schema Registry URL: The public endpoint of your schema registry.

- API Key and API Key Secret: Navigate to Confluent Cloud Environment's Schema Registry to copy the API Key and API Secret. Ensure the account used to create this API key has DeveloperRead or higher permission on the schema.

- Privacy Level: Choose from None, Private, Organizational, or Public.

JSON output decimal format: Specifies the JSON serialization format for Decimal logical type values in the data from the source.

- NUMERIC: Serialize as numbers.

- BASE64: Serialize as base64 encoded data.
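The difference between the two formats is easier to see with a concrete value. An Avro decimal is stored as the big-endian two's-complement bytes of an unscaled integer, with the scale kept in the schema. The sketch below renders those bytes both ways. It's an illustration that assumes the options behave like the similarly named Kafka Connect `decimal.format` values; the function name is hypothetical.

```python
import base64
from decimal import Decimal
from typing import Union


def render_decimal(unscaled_bytes: bytes, scale: int, fmt: str) -> Union[Decimal, str]:
    """Render an Avro decimal's raw bytes as NUMERIC or BASE64.

    unscaled_bytes: big-endian two's-complement unscaled integer (per Avro).
    scale: number of digits after the decimal point, taken from the schema.
    """
    if fmt == "BASE64":
        # Raw bytes passed through as text; the reader still needs the scale.
        return base64.b64encode(unscaled_bytes).decode("ascii")
    if fmt == "NUMERIC":
        unscaled = int.from_bytes(unscaled_bytes, "big", signed=True)
        return Decimal(unscaled).scaleb(-scale)
    raise ValueError(f"unknown decimal format: {fmt!r}")


raw = (12345).to_bytes(2, "big")         # unscaled value 12345
print(render_decimal(raw, 2, "NUMERIC"))  # the value 123.45
print(render_decimal(raw, 2, "BASE64"))   # the same bytes, base64 encoded
```

NUMERIC is generally easier for downstream consumers to read, while BASE64 preserves the original bytes exactly.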

Select Next. On the Review and create screen, review the summary, and then select Add (Eventstream) or Connect (Real-Time hub).

The Confluent Cloud for Apache Kafka source is added to your eventstream on the canvas in Edit mode. To apply the newly added source, select Publish on the ribbon.

After you complete these steps, the Confluent Cloud for Apache Kafka source is available for visualization in Live view.

Note

To preview events from this Confluent Cloud for Apache Kafka source, ensure that the API key used to create the cloud connection has read permission for consumer groups prefixed with "preview-". If the API key was created using a user account, no extra steps are required, as this type of key already has full access to your Confluent Cloud for Apache Kafka resources, including read permission for consumer groups prefixed with "preview-". However, if the key was created using a service account, you need to manually grant read permission to consumer groups prefixed with "preview-" in order to preview events.

For Confluent Cloud for Apache Kafka sources, preview is supported for messages in Confluent AVRO format when the data is encoded using Confluent Schema Registry. If the data isn't encoded using Confluent Schema Registry, only JSON formatted messages can be previewed.

Related content

A few other connectors: